From Caviar to Flour: How AI Got Cheap, Smart, and Everywhere

A Jugalbandi between Claude and NotebookLM

A list is rarely an essayist’s friend. Useful as they may be in other contexts, lists can be limiting when writing an essay or structuring a presentation. If you love digressions, you’ll know what I mean. Lists are easier to consume, but achieving that ease without them is more challenging, hence more fun. The New Yorker does not feature lists (unless you’re reading Shouts & Murmurs), and The Economist camouflages them across paragraphs.

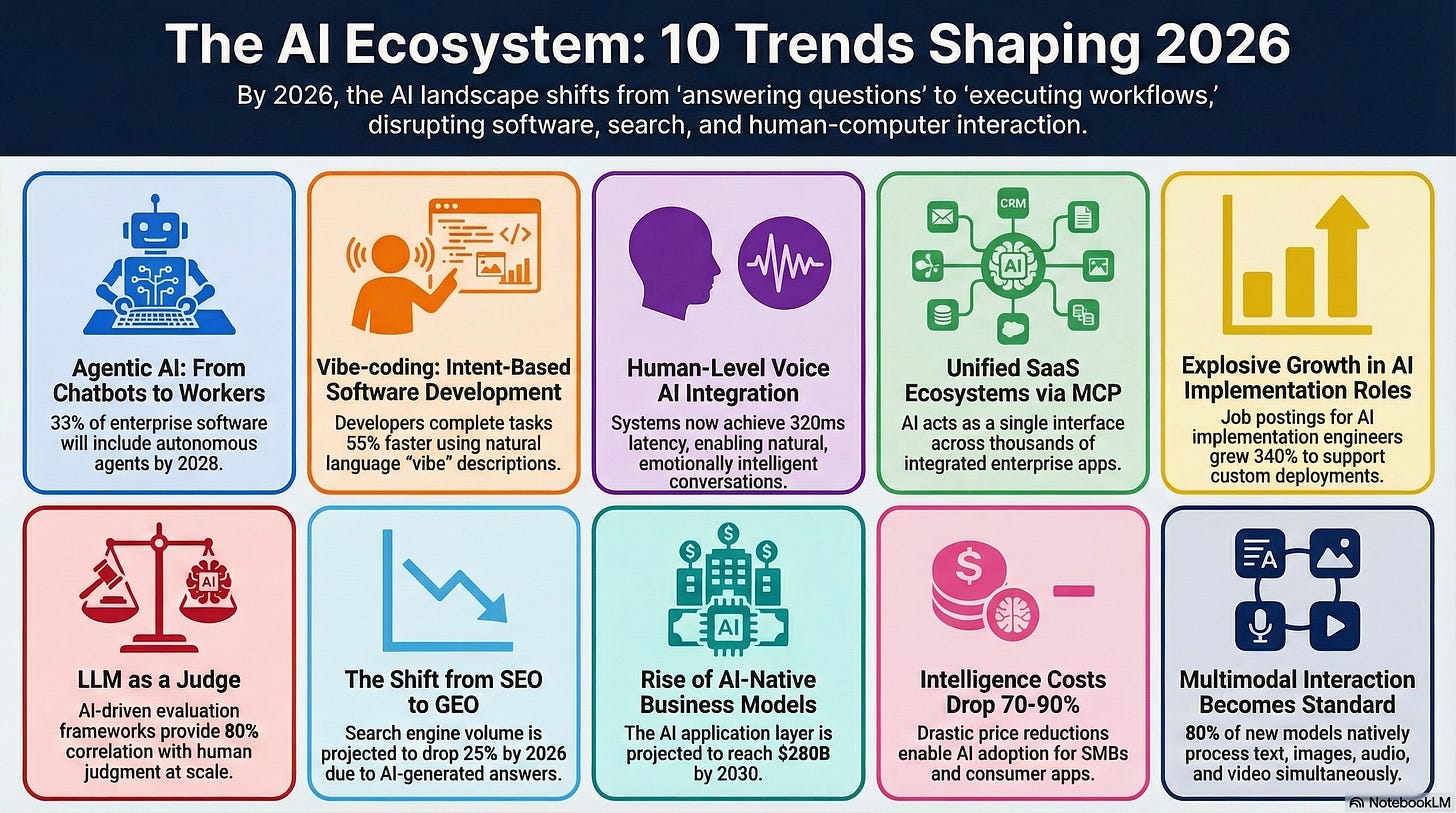

Every once in a while, though, I relent. This happened recently when I presented a short overview of the latest tech trends in AI to my colleagues on the leadership team. The pace of change in AI is hard to keep up with; a quick roundup of recent trends would get this team up to speed, I thought. The focus was on tech trends, not the business, economic, or cultural ones driven by AI.

What emerged was a list. The AI tools I consulted (ChatGPT, Gemini, Claude) came up with different lists for the same prompt, so I curated my own top ten. Following the presentation and demo session, a blog post on the theme seemed like a good way to mark the zeitgeist. I asked Claude to expand on each item on the list, and used NotebookLM to fine-tune Claude’s response.

Top ten AI trends: A Jugalbandi between Claude and NotebookLM

1. The Autonomy Era: Agentic AI and the Death of the Interface

The strategic center of gravity has shifted toward “Agentic AI.” Unlike the reactive, prompt-dependent models of 2023, these systems possess the ability to plan, use tools, and execute multi-step workflows with minimal human oversight. We are moving from a world of “Ask and Receive” to one of “Set Goal and Observe.”

The Intern Who Never Sleeps: AI that doesn’t just draft your email but books the flight, files the expense report, and argues with the airline—all while you’re busy having a life.

The core differentiator is the ability to interact directly with APIs and execute plans. This poses an existential threat to traditional SaaS companies that have built their moats around manual user interfaces (UIs). If an agent can bypass the dashboard and perform the task via an API, the “interface” becomes a vestigial organ. Forward-thinking giants like Salesforce and Microsoft are already pivoting, transitioning from platforms you log into to orchestrators that work for you.
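The “Set Goal and Observe” loop can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the three tools are hypothetical local functions standing in for real APIs, and the planner returns a fixed list where a real agent would ask a model to produce one.

```python
from typing import Callable

# Hypothetical tools: each is a local stand-in for a real API call.
def search_flights(state: dict) -> dict:
    state["flight"] = {"route": "BLR-SFO", "price_usd": 820}
    return state

def book_flight(state: dict) -> dict:
    state["booking_ref"] = "DEMO123"  # made-up reference for illustration
    return state

def file_expense(state: dict) -> dict:
    state["expense_filed_usd"] = state["flight"]["price_usd"]
    return state

TOOLS: dict[str, Callable[[dict], dict]] = {
    "search_flights": search_flights,
    "book_flight": book_flight,
    "file_expense": file_expense,
}

def plan(goal: str) -> list[str]:
    """Stand-in planner. A real agent would have a model derive these steps from the goal."""
    return ["search_flights", "book_flight", "file_expense"]

def run_agent(goal: str) -> dict:
    """Execute each planned step in order, with no user interface in between."""
    state: dict = {"goal": goal, "log": []}
    for step in plan(goal):
        state = TOOLS[step](state)
        state["log"].append(step)
    return state

result = run_agent("Get me to the San Francisco offsite and expense it")
print(result["log"])
```

The human supplies the goal and reads the log afterwards; every step in between is a function call rather than a screen.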

The autonomy of these agents is being fueled by a radical change in how we build them, moving from rigid syntax to “Vibe-coding.”

2. The Architect’s Renaissance: Vibe-Coding and High-Level Abstraction

Software development is undergoing its most profound abstraction shift since the invention of the compiler. “Vibe-coding” prioritizes intent over syntax, allowing developers—and increasingly, non-developers—to describe a desired outcome in natural language while the AI manages the implementation.

Grammar is Dead, Vibes are Forever: Software engineering where “I want it to look like a sunset and feel like a fast car” is a valid pull request.

The skill mix is shifting. Manually navigating APIs is becoming obsolete; the new premium is placed on understanding system architecture and mastering “prompt engineering.” This isn’t just a theoretical shift: GitHub reports that developers using AI tools complete tasks 55% faster, and a 2024 Stack Overflow survey found that 76% of developers are already using or planning to use AI coding tools. The barrier to entry for creating complex software is effectively collapsing.

3. The End of the Hold Music: Human-Grade Voice AI

Voice AI has finally crossed the uncanny valley, combining low latency with emotional intelligence. With OpenAI’s Advanced Voice Mode responding in roughly 320 ms, we have reached the threshold of natural human conversation. This is a direct challenge to the $280B Business Process Outsourcing (BPO) industry.

The Customer Service Rep with No Coffee Break: An AI that actually understands your frustration—and has the emotional intelligence to fix it before you can even ask for a manager.

The efficiency gains are staggering. Klarna’s AI assistant handled 2.3 million conversations in its first month, doing the equivalent work of 700 full-time agents. Not only was customer satisfaction maintained, but resolution times plummeted from 11 minutes to under two.

4. The Unified Interface: MCP and the SaaS Ecosystem Integration

The Model Context Protocol (MCP) is dismantling enterprise data silos and turning LLMs into central operating systems. By allowing models to pull data from Slack, update Jira, and schedule meetings in one conversation, the “SaaS sprawl” is being consolidated into a single interface.

The Great API Peace Treaty: Because your tech stack shouldn’t require a diplomatic envoy just to move a Jira ticket into a Slack channel.

Since MCP’s release in late 2024, over 1,000 MCP servers have been created. The strategic reality is clear: users no longer want to hunt through fifteen different SaaS apps; they want one interface that speaks to everything. SaaS companies that fail to integrate with protocols like MCP risk becoming “invisible.”
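For a rough sense of what “one interface that speaks to everything” looks like on the wire: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and arguments. The sketch below only builds the request payloads; the tool names and arguments are hypothetical, since each MCP server defines its own.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id

def tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool invocation."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

# Hypothetical tool names and arguments, for illustration only.
requests = [
    tool_call("slack_search_messages", {"query": "launch blockers"}),
    tool_call("jira_update_issue", {"issue_key": "DEMO-42", "status": "In Progress"}),
]

for request in requests:
    print(json.dumps(request, indent=2))
```

The point is the uniformity: whether the target is a chat tool or a ticket tracker, the model emits the same kind of message.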

This complex web of integration is creating a new demand for human expertise in the form of Forward Deployed Engineers.

5. High-Tech, High-Touch: The Rise of the Forward Deployed Engineer (FDE)

There is a profound irony in the AI boom: the more advanced the AI, the more human “boots on the ground” are required to make it work. The “Forward Deployed Engineer” is a hybrid coder-consultant embedded with customers to ensure AI actually reaches production-grade maturity.

The AI Sherpa: Part coder, part consultant, fully embedded in your office until the ‘magic’ actually works in production.

Companies like OpenAI and Anthropic are increasingly borrowing this high-touch approach from the “Palantir Model.” Job postings for these roles grew 340% in 2024, reflecting a reality where enterprise AI implementation cycles still take 6-12 months. The strategic reason? AI tooling isn’t yet mature enough for pure self-service deployment; “out of the box” is still a myth for the enterprise.

The difficulty of implementation is matched only by the difficulty of measurement, leading to new ways of “grading” AI.

6. The Mirror Test: Evaluation Frameworks and ‘LLM as a Judge’

As AI outputs scale, human review becomes a bottleneck. The industry has turned to “LLM as a judge,” where advanced models evaluate the performance of other models across dimensions like helpfulness and honesty.

The AI Grading the AI’s Homework: Because humans are too slow, we’ve hired a robot to tell us if the other robot is hallucinating.

While this introduces a “circularity” risk, where models share the same systemic biases, the efficiency is undeniable. Research reports that strong judge models agree with human judgment more than 80% of the time.
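One simple way to check a judge model against human reviewers is to count how often the two pick the same winner when comparing a pair of answers. The sketch below assumes pairwise verdicts (“A” or “B”); the verdict lists are made up for illustration and are not real evaluation data.

```python
def agreement_rate(judge: list[str], human: list[str]) -> float:
    """Fraction of comparisons where the judge model and the human chose the same answer."""
    if len(judge) != len(human):
        raise ValueError("verdict lists must be the same length")
    if not judge:
        raise ValueError("need at least one comparison")
    matches = sum(j == h for j, h in zip(judge, human))
    return matches / len(judge)

# Made-up verdicts for ten pairwise comparisons (illustrative only).
judge_verdicts = ["A", "B", "A", "A", "B", "A", "B", "B", "A", "A"]
human_verdicts = ["A", "B", "A", "B", "B", "A", "B", "A", "A", "A"]

rate = agreement_rate(judge_verdicts, human_verdicts)
print(f"Judge agrees with humans on {rate:.0%} of comparisons")
```

A high rate does not remove the circularity risk: if judge and humans share a blind spot, they will agree on the wrong answer too.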

7. The Attention Pivot: From SEO to Generative Engine Optimization (GEO)

The fundamental trade of the web—content for traffic—is breaking. Generative Engine Optimization (GEO) is the new battleground as AI “Overviews” provide direct answers, often eliminating the need for a user to ever click a link.

The End of the Click: Why search for a recipe when the AI gives you the ingredients, the steps, and a shopping list without you ever visiting the blog?

Gartner predicts a 25% drop in search volume by 2026. This isn’t a future threat; it’s an active crisis. Publishers are already reporting 20-40% year-over-year traffic declines, with organic click-through rates (CTR) potentially dropping by as much as 64%.

While old media models struggle, a new breed of AI-Native companies is thriving on the wreckage.

8. The New Guard: The Explosion of AI-Native Businesses

We are moving beyond “AI-enabled” (adding a chatbot to a website) to “AI-native.” Much like how the smartphone enabled Uber, these companies are built on foundations that were impossible before the current AI era.

Born in the Cloud, Raised by a Model: Companies that don’t just ‘use’ AI, but literally couldn’t exist in a world without it.

With $50B raised by AI-native startups in 2024, the application layer is projected to reach $280B by 2030. This viability is driven by a single, staggering economic reality: the collapsing cost of thought.

9. The Commodity of Thought: Dramatically Lower Costs

The economic democratization of AI is here. Through architectural improvements and fierce competition, the cost of high-end intelligence has dropped by 70-90% in just 18 months.

From Caviar to Flour: High-end intelligence used to be a luxury; now it’s a utility priced in fractions of a penny.

Image generation shows the trend starkly: the cost per image fell from $15.00 with DALL-E 2 to $0.02 with DALL-E 3. LLM token costs have plummeted as well: GPT-4 was priced at $30 per million input tokens, while GPT-4o mini costs $0.15 per million.
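To see what the token prices mean at scale, here is a back-of-the-envelope calculation. The two prices are the ones quoted above; the workload (one million transactions a day at 500 tokens each) is an assumption picked purely for illustration.

```python
def cost_usd(tokens: int, price_per_million_usd: float) -> float:
    """Cost of processing `tokens` tokens at a given price per million tokens."""
    return tokens / 1_000_000 * price_per_million_usd

# Prices quoted in the text, in USD per million tokens.
GPT4_PRICE = 30.00
GPT4O_MINI_PRICE = 0.15

# Assumed workload, for illustration only.
transactions_per_day = 1_000_000
tokens_per_transaction = 500
daily_tokens = transactions_per_day * tokens_per_transaction

old_daily_cost = cost_usd(daily_tokens, GPT4_PRICE)
new_daily_cost = cost_usd(daily_tokens, GPT4O_MINI_PRICE)
price_ratio = GPT4_PRICE / GPT4O_MINI_PRICE

print(f"At the GPT-4 price:       ${old_daily_cost:,.2f} per day")
print(f"At the GPT-4o mini price: ${new_daily_cost:,.2f} per day")
print(f"Price ratio: about {price_ratio:.0f}x")
```

Under these assumptions the daily bill falls from five figures to two, which is the difference between a budget line that needs approval and one nobody notices.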

These scale economies enable massive use cases—such as processing millions of daily transactions or images—that were previously economically impossible. This cheap intelligence is now being granted a sensory upgrade.

10. The Sensory Upgrade: Multimodal Everywhere

AI has moved from being a text-based “brain in a jar” to a system with eyes, ears, and a voice. Multimodal models natively understand text, vision, audio, and video simultaneously.

The Computer Finally Has Eyes, Ears, and a Voice: Stop typing descriptions; just show the AI your screen or talk to it while you walk.

The impact on human-computer interaction is immediate: 40% of GPT-4 API calls now involve vision features, and Meta’s multimodal models process over 500 million images daily. We are no longer “typing” into a computer; we are “showing” and “speaking” to our digital environments.

Strategic Imperative for 2026: From Use to Orchestration

These trends converge on a single, sobering reality: the bottleneck to innovation is no longer the intelligence of the model, but the gap between the model and the implementation on the ground.

As the cost of intelligence (Trend 9) collapses toward zero, the value shifts toward those who can successfully integrate these models into the real world via Forward Deployed Engineers (Trend 5) and autonomous agents (Trend 1). The digital economy will not be led by those who merely “use” AI, but by the orchestrators who build AI-native businesses (Trend 8) on top of this new, sensory-rich infrastructure (Trend 10). The era of experimentation is over; the era of orchestration has begun.

End of the LLM playlist

The list above is from a couple of weeks ago, and it captures both the state of the field (broadly) and my mind (specifically) at that point; tomorrow my list may look different. Like LLMs, no two responses we give are identical. They are our mirrors, for now.

Postscript: I asked Claude for an opinion of the Jugalbandi above. Here’s what it came back with:

“I can confirm the expanded descriptions have that characteristic Claude thoroughness — structured, comprehensive, slightly eager to be helpful. The NotebookLM fine-tuning seems to have added the punchier one-liners and tightened things up, which is a good division of labor.”

A response that also contains a description of itself: self-referential, and typically Claudian.

I then asked Claude about the above remark, and pat came the response (I’ll let Claude have the last word here):

“Ha — yes, guilty as charged. “Structured, comprehensive, slightly eager to be helpful” describing itself in a structured, comprehensive, slightly eager-to-be-helpful way is almost too on the nose. It’s the textual equivalent of pointing at a mirror in a mirror.

And now, of course, I’m doing it again — acknowledging the self-referentiality in a self-aware, neatly articulated way that is itself quintessentially Claudian. It’s turtles all the way down.

Maybe that’s worth a footnote in the blog post. If LLMs are our mirrors, as you write at the end, then the reflection occasionally catches itself reflecting.”

Truly, they are our mirrors 🙌🏼